AI Governance · May 4, 2026

Building Ethical AI: Best Practices for Post-Deployment Monitoring in Visa Applications

Discover best practices for post-deployment AI monitoring to maintain ethical standards and compliance in visa application assistance.

Why Post-Deployment Monitoring Matters in Visa Tech

The modern visa process increasingly relies on complex AI systems to assess applicant credentials, forecast business viability and ensure compliance with immigration rules. However, without robust AI application monitoring, these systems risk unfair outcomes, hidden bias or technical failures. Continuous oversight helps maintain transparency, builds trust among applicants and safeguards governments from unintended societal impacts.

In this article, we explore how AI application monitoring can be integrated into visa application services, highlighting best practices, key data streams and governance frameworks. Whether you’re an immigration adviser, a policy maker or an entrepreneur preparing your UK Innovator Founder Visa application, you’ll discover actionable steps to ensure AI remains ethical, compliant and effective.

Understanding Ethical Risks in AI-Powered Visa Screening

Visa applications hinge on fairness. AI tools can speed up document checks, background analysis and business plan evaluations. Yet they also introduce risks:

- Unintended bias: models may downgrade candidates based on nationality or education.

- Data privacy gaps: sensitive applicant information must be protected at every stage.

- Opaque decision logic: lack of transparency undermines applicant confidence.

- Systemic errors: a model update may trigger new classification mistakes.

Ethical governance demands a lifecycle approach: from design and testing to AI application monitoring once models go live. We must ask: who sees usage logs? How do we flag misclassifications? Which metrics reveal hidden drift?

Key Elements of AI Application Monitoring for Visa Applications

Effective AI application monitoring covers three core information domains, borrowed from emerging AI governance frameworks.

1. Model Integration and Usage Data

This data shows which AI engines are in use across visa platforms and how they’re integrated:

- Underlying models: GPT-based screening or custom rule-based classifiers.

- Deployment channels: web portals, mobile apps or third-party APIs.

- Sector coverage: business viability, background checks or compliance reviews.

By tracking model adoption patterns, regulators can spot concentration risks, such as one provider dominating UK visa interviews. Visa service teams can then diversify model sources or adjust thresholds to mitigate systemic bias.

2. Application Usage Insights

Usage logs reveal real-world interactions:

- Guardrail effectiveness: how often terms-of-use violations are flagged.

- Misuse reports: patterns in appeal requests that signal false negatives.

- Watermarks & audit trails: tamper-proof logs of model queries and responses.

Continuous user feedback loops strengthen the system. When a candidate challenges an AI decision, that incident becomes a learning opportunity. Over time, you’ll refine screening rules, boosting both accuracy and fairness.
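One common way to approximate a tamper-proof audit trail is a hash chain, in which every log entry stores the hash of the entry before it. The sketch below is illustrative only: the `AuditLog` class and its field names are hypothetical, and strictly speaking the technique makes tampering detectable rather than impossible. It is not a description of any particular visa platform's logging.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry commits to the hash of the previous one."""
    entries: list = field(default_factory=list)

    def append(self, query: str, response: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        record = {"query": query, "response": response, "prev_hash": prev_hash}
        record["hash"] = _digest(record)  # hash covers everything except itself
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = GENESIS_HASH
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
                return False
            prev_hash = record["hash"]
        return True
```

Editing or deleting an earlier entry changes its hash, so `verify()` fails for that entry or the one after it. In practice the latest hash would also need to be stored somewhere the log's operator cannot rewrite, such as with a regulator or an external timestamping service.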

3. Impact and Incident Reporting

This layer focuses on outcomes and near misses:

- Incident registers: detailed reports on classification errors and appeal outcomes.

- Socioeconomic metrics: time-to-decision, approval rates by nationality or sector.

- Resilience tests: can the system handle surges in applications without degradation?

Publishing anonymised incident summaries fosters public trust. Applicants see that the service takes errors seriously, regulators verify compliance, and your team learns where to prioritise improvements.
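Approval rates by group, one of the metrics listed above, can be computed from decision records in a few lines. The sketch below assumes each record is a `(group, approved)` pair; the function names and the ratio-based summary are illustrative choices, and the level at which a ratio should trigger a review is a policy decision this example does not make.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return {group: approval rate} from an iterable of (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates.

    Assumes `rates` contains at least one group.
    """
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # every group has a 0% approval rate, so no disparity
    return min(rates.values()) / highest


# Hypothetical, anonymised records: (group label, was the application approved?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = approval_rates(sample)   # {"A": 0.75, "B": 0.25}
ratio = disparity_ratio(rates)   # about 0.33
```

A low ratio does not prove bias on its own, since groups may differ in other respects such as sector or application type. It does indicate where an incident review should look first.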

Roles and Responsibilities in Visa AI Governance

Successful AI application monitoring demands collaboration across stakeholders.

Government and Regulatory Bodies

- Setting standards: define mandatory reporting fields for model performance, error rates and bias assessments.

- Mandating audits: require periodic third-party reviews of AI-driven decision engines.

- Building capacity: train immigration officers and data scientists to interpret monitoring dashboards.

Visa Service Providers

- Transparency commitments: share integration logs with authorities while preserving applicant confidentiality.

- Internal governance: appoint a senior lead responsible for post-deployment oversight.

- Research partnerships: fund studies into long-term societal impacts of AI on migration patterns.

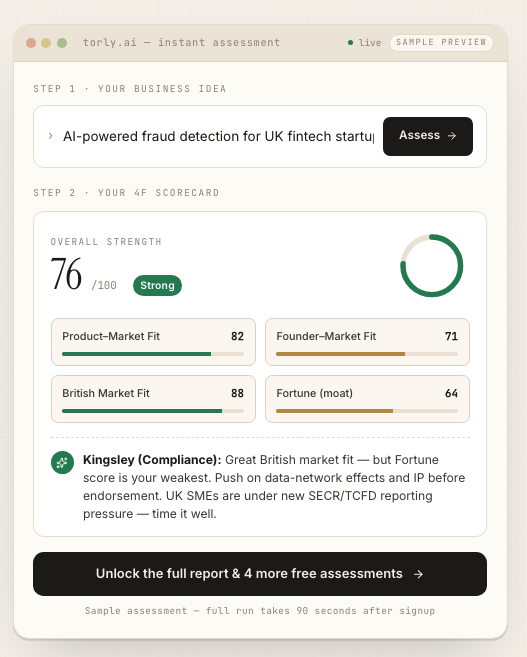

Torly.ai’s evaluation-driven platform streamlines these tasks by offering end-to-end visa readiness analysis, real-time compliance checks and dynamic scoring against endorsing body criteria.

Implementing Best Practices for Ethical Monitoring

Bringing these ideas to life requires concrete policies and technical measures:

- Define clear KPIs: accuracy, fairness, latency, privacy compliance.

- Standardise logging schemas: ensure consistent data capture across tools.

- Adopt privacy-preserving sharing: differential privacy or secure enclaves for regulator access.

- Automate alerts: flag unusual shifts in approval rates or demographic disparities.

- Conduct periodic stress tests: simulate policy changes or high application loads.
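A standardised logging schema, as suggested in the list above, can start as a single validated record type that every tool emits. The sketch below is a minimal example under stated assumptions: the field names, the allowed decision labels and the 0 to 1 score range are all hypothetical and would need to match whatever reporting fields a regulator or provider actually defines.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical decision labels; a real schema would define its own.
ALLOWED_DECISIONS = {"approve", "refer", "reject"}


@dataclass(frozen=True)
class DecisionLogRecord:
    """One row of the shared decision log (illustrative fields only)."""
    application_id: str   # pseudonymous ID, never the applicant's name
    model_version: str
    decision: str
    score: float          # model confidence, assumed to lie in [0, 1]
    latency_ms: int
    timestamp: str        # ISO 8601, e.g. "2026-05-04T09:00:00+00:00"

    def __post_init__(self):
        # Reject malformed records at write time rather than at audit time.
        if self.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"unknown decision: {self.decision!r}")
        if not 0.0 <= self.score <= 1.0:
            raise ValueError(f"score out of range: {self.score}")
        if self.latency_ms < 0:
            raise ValueError(f"negative latency: {self.latency_ms}")

    def to_json(self) -> str:
        """Serialise with sorted keys so identical records produce identical lines."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionLogRecord(
    application_id="app-001",
    model_version="v1.2.0",
    decision="refer",
    score=0.62,
    latency_ms=140,
    timestamp="2026-05-04T09:00:00+00:00",
)
line = record.to_json()
```

Validating at construction time means a tool that emits an unexpected label fails loudly instead of quietly polluting downstream dashboards.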

These steps embed AI application monitoring into everyday operations, not as an afterthought but as a vital feedback loop.
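The automated alerts mentioned above could be as simple as comparing a recent window of decisions with a baseline period. The sketch below uses a standard two-proportion z-test for that comparison. The function name, the input shape and the default threshold of 3.0 are assumptions made for illustration, and a production system would likely also account for seasonality and policy changes.

```python
import math


def approval_rate_shift(baseline_approved, baseline_total,
                        recent_approved, recent_total, z_threshold=3.0):
    """Flag a shift in approval rate between a baseline and a recent window.

    Uses a two-proportion z-test with a pooled standard error.
    Both totals must be greater than zero.
    """
    baseline_rate = baseline_approved / baseline_total
    recent_rate = recent_approved / recent_total
    pooled = (baseline_approved + recent_approved) / (baseline_total + recent_total)
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / baseline_total + 1 / recent_total)
    )
    # std_err is zero only when every decision in both windows is identical.
    z = 0.0 if std_err == 0 else (recent_rate - baseline_rate) / std_err
    return {
        "baseline_rate": baseline_rate,
        "recent_rate": recent_rate,
        "z": z,
        "alert": abs(z) >= z_threshold,
    }


# Hypothetical counts: 700 of 1000 approved historically, 50 of 100 this week.
drop = approval_rate_shift(700, 1000, 50, 100)
# Hypothetical counts: 69 of 100 approved this week, close to the baseline.
steady = approval_rate_shift(700, 1000, 69, 100)
```

With these made-up counts, the first comparison raises an alert and the second does not. Running the same check per nationality or sector turns it into a demographic disparity alert, at the cost of smaller and noisier samples per group.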

Midway through your implementation plan, consider how Torly.ai’s multi-layered assessments can integrate seamlessly, providing 24/7 tracking of model performance and actionable remediation suggestions.

Spotlight on Torly.ai’s Monitoring Framework

Torly.ai brings a unique blend of AI reasoning and compliance tooling tailored for the UK Innovator Founder Visa:

- Business Idea Qualification: automated checks for innovation and scalability.

- Background Assessment: analyse applicant expertise and endorsement readiness.

- Gap Identification & Roadmap: targeted recommendations to strengthen your application.

With Torly.ai you gain continuous post-deployment oversight, minimising surprises and maximising approval chances. It’s not just about building a plan; it’s about ensuring your AI stays ethical and effective long after launch.

Testimonials

“Using Torly.ai’s monitoring features transformed our application process. The real-time alerts flagged a subtle bias issue before submission, saving us weeks of rewrites.”

— Priya Sharma, Start-up Founder

“Torly.ai’s incident reporting dashboard is a game-changer. We catch misclassifications instantly and adjust our criteria on the fly.”

— Mark Evans, Immigration Consultant

“As a policymaker, I appreciate Torly.ai’s transparent logs. We verify compliance without compromising applicant privacy.”

— Dr Emma Wright, UK Home Office Advisor

Conclusion & Next Steps

Building ethical AI in visa applications isn’t optional. Robust AI application monitoring ensures fairness, protects sensitive data and upholds public trust. By tracking integration data, usage insights and incident reports, you create a virtuous cycle of improvement.

Ready to embed best-in-class monitoring into your visa workflows? Take the next step today.