AI Evaluation and Validation · May 17, 2026

Evaluating AI Accuracy: Torly.ai’s Custom Text Classification Metrics for Visa Application Guidance

Delve into Torly.ai’s rigorous evaluation metrics for custom text classification that ensure precise and reliable Visa application advice.

Why Text Classification Accuracy Is Your Visa Application Guardian

AI is only as good as its metrics. When you seek UK Innovator Founder Visa guidance, misclassifications can cost you time and money. That is why text classification accuracy matters so much. It’s the compass that tells us if our model is precise, reliable and ready for real-world visa advice.

In this article we explore how Azure’s custom text classification evaluation metrics—precision, recall, F1 score and confusion matrix—form the bedrock of rigorous AI assessment. Then we reveal how Torly.ai elevates these metrics into an intelligent visa readiness analyst. Along the way you’ll see concrete steps to improve your model and discover how Torly.ai keeps entrepreneurs on track with ultra-precise visa advice. Enhance text classification accuracy with our AI-Powered UK Innovator Visa Application Assistant

Understanding Precision, Recall and F1 for Text Classification Accuracy

When you train a custom text classifier for visa guidance, you split data into training and test sets. The model learns from the training set and is judged on the test set it’s never seen. Three key metrics measure its success:

-

Precision

How many of the texts your model labels as a given class are correct?

Formula: True Positives / (True Positives + False Positives). -

Recall

Of all the texts that truly belong to a class, how many did the model catch?

Formula: True Positives / (True Positives + False Negatives). -

F1 Score

The harmonic mean of precision and recall. Ideal when you need both.

Formula: 2 × (Precision × Recall) / (Precision + Recall).

Precision, recall and F1 score can be computed at two levels: class-by-class to spot weak areas, and model-level to gauge overall text classification accuracy.

Class-Level vs Model-Level Evaluation

It helps to see metrics in action. Let’s imagine a small dataset of five documents tagged with genres. Here’s how we might count for the “action” class:

• True Positives: documents correctly flagged as action.

• False Positives: non-action docs wrongly tagged as action.

• False Negatives: missed action docs.

If we get 1 TP, 1 FP and 1 FN, then:

- Precision = 1 / (1 + 1) = 0.5

- Recall = 1 / (1 + 1) = 0.5

- F1 Score = 2 × 0.5 × 0.5 / (0.5 + 0.5) = 0.5

At model-level we sum all classes:

- Total TPs, FPs, FNs across every class.

- Then apply the same formulas for overall text classification accuracy.

This dual view shows whether a specific class drags down accuracy, or if the model needs a global tune-up.

The Confusion Matrix: Visualising Missteps

A confusion matrix lays out expected labels versus predicted ones in a grid. Correct predictions sit on the diagonal. Mistakes stray into off-diagonal cells. For multi-label tasks the matrix shows which classes overlap or get confused.

Why it matters for visa guidance:

- Spot classes that frequently swap places (for example “eligibility check” vs “document review”).

- Decide if two similar classes should merge, or if more examples are needed to distinguish them.

- Quickly see if your text classification accuracy suffers from a few bad pairs.

This holistic snapshot helps you focus on the right fixes rather than blind tuning.

Practical Tips to Optimise Text Classification Accuracy

You’ve seen the metrics. Now how do you raise them?

• Ensure each class has at least 15 labelled examples in your training data. Fewer leads to low precision and recall.

• Balance class distribution so your model doesn’t favour over- or under-represented labels.

• Keep training and test sets aligned in class mix; sample bias can skew accuracy.

• Write clearly distinct examples for each class. If two labels share language, the model will wonder which way to go.

These straightforward steps often give your metrics a healthy boost with minimal effort.

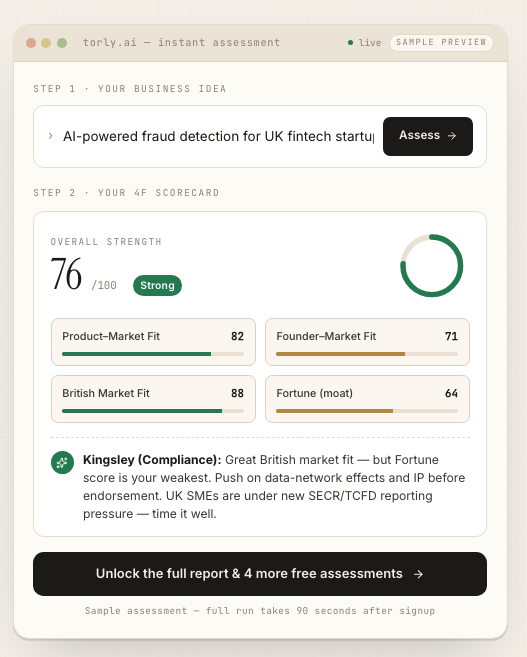

How Torly.ai Harnesses Rigorous Evaluation for Visa Guidance

Traditional visa consultants rely on checklists. Torly.ai goes deeper. It integrates custom text classification metrics to power an Intelligent Visa Readiness Analyst. Here is how it stands out:

- Multi-layered assessments of eligibility rules, founder background and business idea viability.

- Automated document tagging with high text classification accuracy to ensure advice matches Home Office expectations.

- Real-time feedback loops that compare predicted advice against approved applications, refining the model on the fly.

- A step-by-step roadmap built from class-level insights, so you know exactly where to strengthen your application.

Every piece of visa guidance you see is backed by precision, recall and F1 analysis. That means fewer surprises at endorsement stage and more confidence you’ve covered every requirement. Plus, you can access this advanced evaluation in the Torly.ai Desktop APP.

Torly.ai in Action: A Mid-Process Checkpoint

Imagine you’ve drafted your executive summary and initial business plan. The AI tags sections as “innovation pitch,” “market analysis,” or “document checklist.” Any mis-tags trigger targeted suggestions. You fix the glitch, and the system resurrects metrics showing improved precision for “innovation pitch.” That is instant, data-driven reassurance.

At this stage your text classification accuracy jump starts your next iteration, focusing your energy on the exact weak spots. Deepen text classification accuracy via our AI-Powered UK Innovator Visa Application Assistant

Real-World Impact: From Data to Decision

One client had an 80% recall on eligibility criteria but only 60% precision on document compliance advice. Torly.ai’s fine-tuning raised both above 90% in under a week. That meant clearer guidance on supporting letters and CV formatting, leading to endorsement approval on first submission.

By interpreting metrics rather than gut feel, you transform your visa application from guesswork into a systematic process. It is AI you can trust.

Conclusion: Trust Metrics, Trust Torly.ai

Custom text classification accuracy isn’t just a statistic. It is the backbone of reliable visa application guidance. With metrics like precision, recall, F1 score and confusion matrix, you gain clarity on every recommendation. Torly.ai’s Innovator Founder Visa AI Assistant leverages these metrics to give you tailored, data-driven advice 24/7. No blind spots, no guesswork—just higher confidence on your path to UK endorsement.

Optimise text classification accuracy with our AI-Powered UK Innovator Visa Application Assistant